Tesla is recreating San Francisco in a simulation to help train Autopilot/FSD

September 21, 2022

By Kevin Armstrong

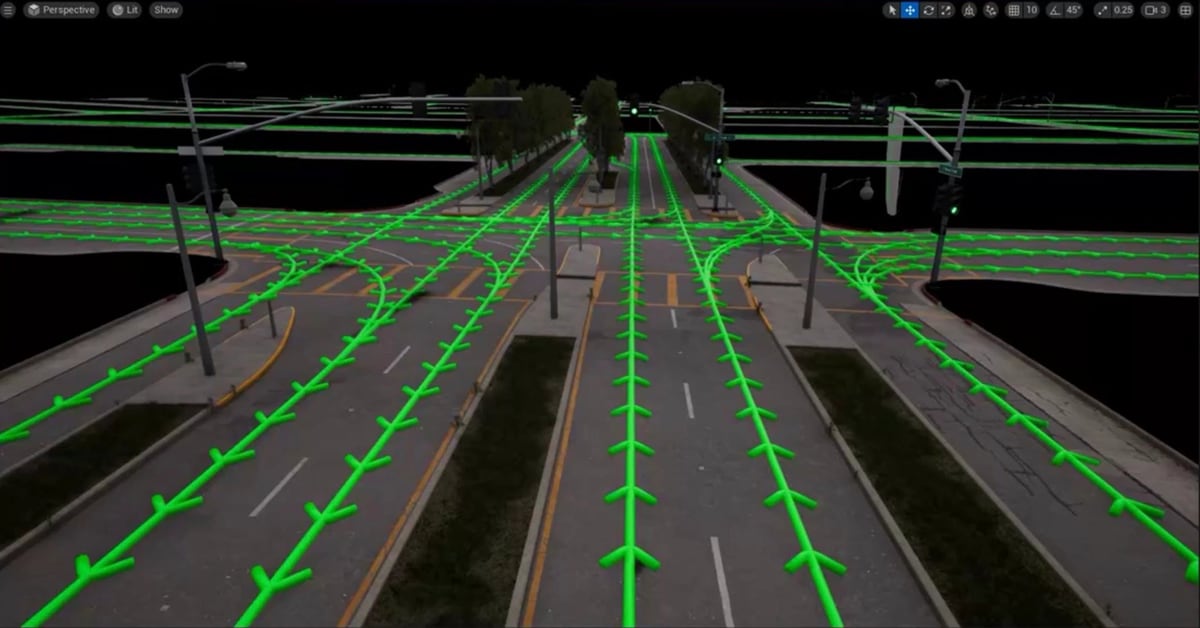

Tesla may be ramping up its use of simulation to train its Autopilot system. Electrek reports that, according to its sources, the company is concentrating on a reproduction of San Francisco. The article includes an image of the recreation and states that Tesla is working with Unreal Engine on its simulation.

According to Electrek, the image above is part of Tesla’s simulation of San Francisco.

Tesla gave the world a look at how it uses simulation to advance the Autopilot program during the first AI Day in August 2021.

AI Day

At the first AI Day, Tesla talked about using simulation to help train Autopilot. The video below is cued up to where the team discusses simulation.

Ashok Elluswamy, the Director of the Autopilot Program, showed a video that, at first glance, looked real apart from the appearance of a Cybertruck. “If I may say so myself, it looks very pretty,” said Elluswamy. He explained that the company is investing heavily in simulation. “It helps when data is difficult to source. As large as our fleet is [FSD Beta users], it can still be hard to get some crazy scenes,” the director explained while showing a rendering of two people and a dog running in the middle of a busy highway. “This is a rare scene, but it can happen, and Autopilot still needs to handle it when it happens,” said Elluswamy.

It appears that Tesla has jumped on Fortnite’s Battle Bus by teaming up with Epic Games and its development platform, Unreal Engine. Fortnite, one of the most popular games of all time with 80 million subscribers and 4 million daily users, was built with Unreal Engine. Epic flexed its creative muscles when it gathered experts to create The Matrix Awakens: An Unreal Engine 5 Experience. The goal was to “blur the boundaries between cinematic and game, inviting us to ask — what is real?” The project spotlight on Unreal Engine shows just how realistic a simulation can be.

https://www.youtube.com/watch?v=WU0gvPcc3

Given Elluswamy’s comments about investing in simulation, it makes sense that Tesla is hiring for several positions that mention simulation in the job description. Electrek pointed out one posting for an Autopilot Rendering Engineer. The posting states that the successful candidate “will contribute to the development of Autopilot simulation by enabling and supporting the creation of photo-realistic 3D scenes that can accurately model the driving experience in a wide range of locales and conditions.” Tesla prefers candidates with experience working with Unreal Engine.

While not new, this does show that Tesla is doubling down on efforts to improve Autopilot. The company recently rolled out the Full Self-Driving (FSD) Beta to 60,000 more users, bringing the program to 160,000 participants in North America.

We can only guess how many thousands of simulations the Autopilot team is conducting to add to the data the Beta testers are collecting. It seems unlikely that Tesla has only created the City by the Bay in its simulations. Perhaps Elluswamy will show more renderings at the second AI Day on September 30th.

October 3, 2022

By Kevin Armstrong

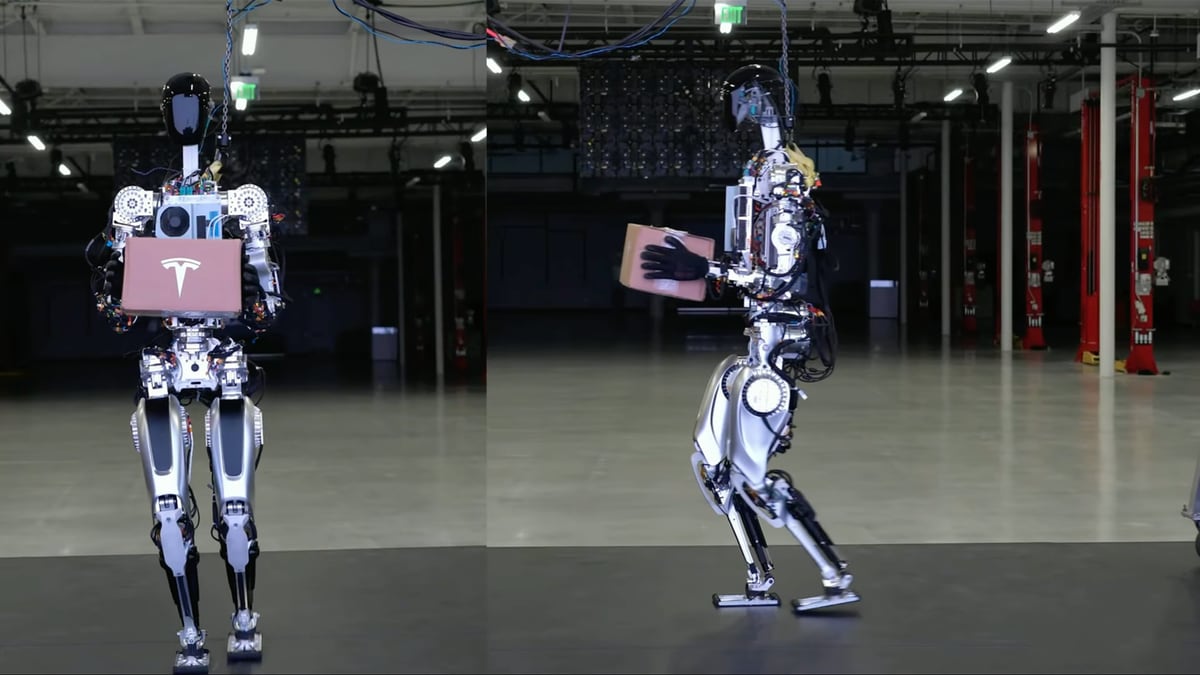

Elon Musk started Tesla’s AI Day 2022 by saying, “I want to set some expectations with respect to our Optimus Robot,” just before the doors opened behind him. A robot walked out, waved at the audience, and did a little dance. It was admittedly a humble beginning, and Musk explained, “the Robot can actually do a lot more than what we just showed you. We just didn’t want it to fall on its face.” He summed up his vision for the Tesla Robot: “Optimus is going to be incredible in five years, ten years mind-blowing.” The CEO said that other world-changing technologies have plateaued, while the Robot is just getting started.

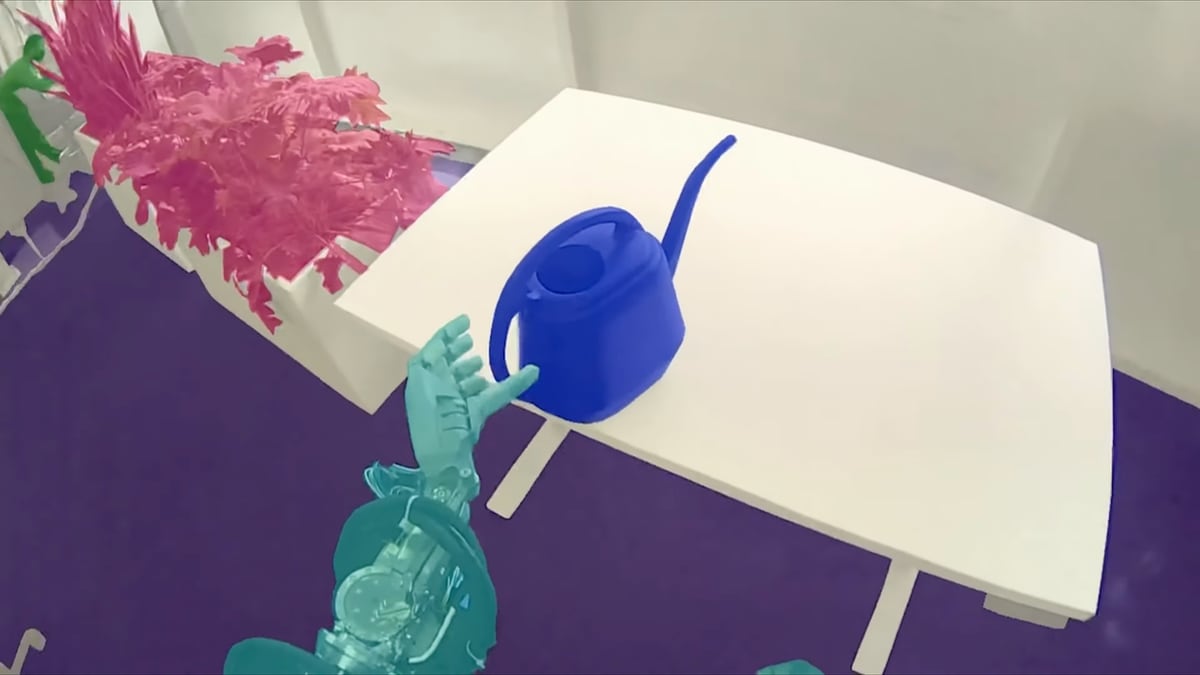

Tesla’s CEO envisions Optimus eventually being like Commander Data, the android from Star Trek: The Next Generation, except that it “would be programmed to be less robot-like and more friendly.” There is undoubtedly a long way to go to achieve what Dr. Noonien Soong created in Star Trek: TNG. What was demonstrated onstage wasn’t at that level, but several videos throughout the presentation highlighted what the Robot is capable of at this very early stage of development. The audience watched the robot pick up boxes, deliver packages, water plants and work at a station at the Tesla factory in Fremont.

Development over 8 months

The first Robot to take the stage at AI Day was not Optimus but Bumble C, another nod to The Transformers, in which Bumblebee plays a significant role. However, Bumble C is far less advanced than Optimus, which did appear later, though it was brought out on a cart.

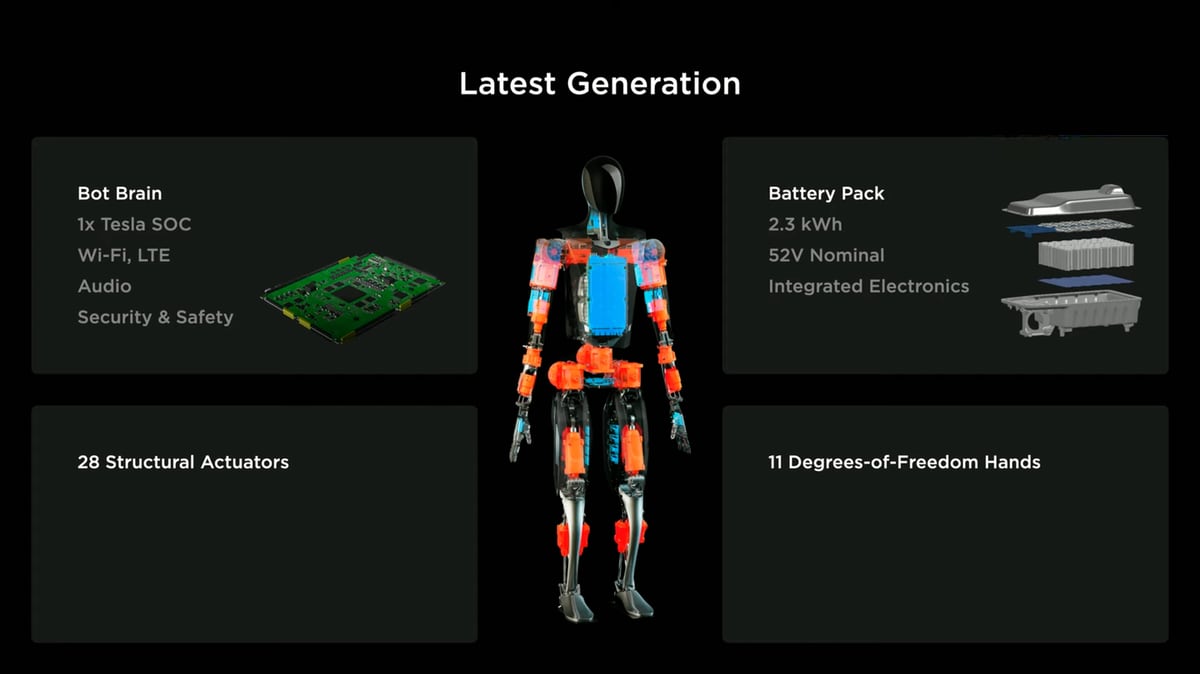

Several Tesla engineers took turns at the microphone describing some of the most complex elements of the project, which was first announced a year earlier. Perhaps the best summary is that the company is moving from building a robot on wheels to building a robot on legs. However, that may be oversimplifying: the car has two motors, while the robot has 28 actuators.

Overall design and battery life

Tesla’s brightest minds demonstrated how the project has come to life over the past eight months. It seems this group of computer masterminds had to become experts in anatomy as Tesla took cues from the human body to create a humanoid robot. That is an essential factor in creating Optimus: everything people interact with is designed to be used by a human with two legs, two arms, ten fingers and so on. If the robot differed from what the world is already designed for, everything would have to change. However, recreating the human body and its countless movements would take far too long, so Tesla has stripped it down to fewer than 30 core movements, not including the hand.

Just as the human torso contains the heart, the robot’s chest holds the battery. Tesla projects that the 2.3-kilowatt-hour battery will provide enough energy for a full day’s work on a single charge. All the battery electronics are integrated into a single printed circuit board within the pack, which keeps charge management and power distribution in one place. Tesla applied lessons learned from vehicle and energy production to the battery design, allowing for streamlined manufacturing and simple, effective cooling.

Autopilot technology

Tesla showed what the Robot sees, and it looked very familiar. That’s because the neural networks are pulled directly from Autopilot. New training data had to be collected to cover indoor settings and other objects the car never encounters. Engineers have trained neural networks to identify high-frequency features and key points within the robot’s camera streams, such as a charging station. Tesla has also adapted the Autopilot simulator for use in programming the Robot.

The torso also contains the centralized computer that Tesla says will do everything a human brain does, such as processing vision data, making split-second decisions based on multi-sensory inputs and supporting communications. In addition, the robot is equipped with wireless connectivity and audio support. Yes, the robot is going to hold conversations. “We really want to have fun, be utilitarian and also be a friend and hang out with you,” said Musk.

Motors Mimic Joints

The 28 actuators throughout the Robot’s frame are placed where many of the joints are in the human body. Just one of those actuators was shown lifting a half-tonne, nine-foot concert grand piano. Thousands of test models have been run to show how the motors work together and how to effectively operate the most relevant actuators for a given task. Even walking requires several calculations that the robot must make in real time, both to perform the motion and to make it appear natural. The robot is programmed with locomotion code: the desired path goes to a locomotion planner, which generates trajectories, while state estimation, very similar in role to the human vestibular system, tracks the robot’s balance and position.

Human hands can move 300 degrees per second and have tens of thousands of tactile sensors. Hands can manipulate almost anything in our daily lives, from bulky, heavy items to delicate ones. Now Tesla is recreating that with Optimus. The robot hand incorporates six actuators and 11 degrees of freedom. It has an in-hand controller that drives the fingers and receives sensory feedback. The fingers have metallic tendons to allow for flexibility and strength. The hands are designed to allow a precision grip of small parts and tools.

Responsible Robot Safety

Musk said he had wanted to start AI Day with the epic opening scene from The Terminator, in which a robot crushes a skull. He has heard the fears and the warnings, “don’t go down the Terminator path,” but the CEO said safety is a top priority. There are safeguards in place, including designs for a localized control ROM, not connected to the internet, that can turn the robot off. He sees this as a stop button or remote control.

Musk said the development of Optimus may broaden Tesla’s mission statement to include “making the future awesome.” He believes most people do not recognize the potential, and that it “really boggles the mind.” Musk said, “This means a future of abundance. There is no poverty. You can have whatever you want in terms of products and services. It really is a fundamental transformation of civilization as we know it.” All of this at a predicted price of less than $20,000 USD.

Tesla Shows Off its First Robot at AI Day 2

September 30, 2022

By Nuno Christovao

Tesla is hosting its recruiting event, AI Day 2, tonight in Palo Alto, California.

Elon Musk said to expect a lot of technical detail and “cool” hardware demos. We don’t know exactly which demos to expect, although Elon did say the event will be focused on AI and robotics.

Elon also talked about how these events are specifically aimed at showing off the exciting things Tesla is working on to attract more talent.

We can expect Tesla to show off its new Tesla Bot, Optimus, talk about FSD and AI, and possibly share some details on FSD Hardware 4.0 and its upcoming Steam integration.

Pretty much. AI/robotics engineers who understand what problems need to be solved will like what they see.

— Elon Musk (@elonmusk) September 29, 2022

Start time

The event took place in Palo Alto, CA on September 30th at 6:15 pm PT.

Watch on Demand

You can watch the event on demand below: